Autonomous-vehicle technology continues to penetrate the fleet-management market. While the COVID-19 outbreak delayed the deployment of self-driving trucks, derivatives of autonomous-vehicle technology will be deployed in 2021 to keep improving fleet and driver safety.

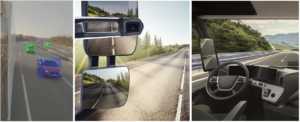

Extensive autonomous-driving research and testing confirm that the camera is still the most versatile sensor on the vehicle, and that it outperforms other sensors such as radar and lidar (laser radar). This understanding is influencing the way fleet managers will use the dash cameras they already have in their fleets.

Telematics Service Providers (TSPs) install dash cameras in commercial vehicles to collect forward-facing video through the windshield. Commonly, the cameras record video only when an abnormal event is detected by the GPS (for example, speeding) or the accelerometer (for example, harsh braking). The shortcoming of this approach is that events triggered by conventional sensors do not tell the complete story. To understand why the driver was speeding or braking harshly, fleet managers have to review the video manually, which is time-consuming and costly.
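The conventional trigger logic described above can be sketched in a few lines. This is only an illustration: the thresholds, field names, and the `trigger_reason` function are hypothetical and are not taken from any particular TSP's product.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical thresholds, chosen for illustration only.
SPEED_MARGIN_KPH = 10.0   # how far over the posted limit counts as speeding
HARSH_BRAKE_G = -0.45     # longitudinal deceleration (in g) treated as harsh braking


@dataclass
class Sample:
    speed_kph: float        # speed derived from GPS
    speed_limit_kph: float  # posted limit for the current road segment
    accel_long_g: float     # longitudinal acceleration from the accelerometer


def trigger_reason(s: Sample) -> Optional[str]:
    """Return why a clip would be saved, or None if nothing abnormal was sensed."""
    if s.accel_long_g <= HARSH_BRAKE_G:
        return "harsh_braking"
    if s.speed_kph > s.speed_limit_kph + SPEED_MARGIN_KPH:
        return "speeding"
    return None


# A hard stop is flagged, but the result carries no information about the cause
# (a pedestrian stepping out, a vehicle cutting in, or simple inattention).
print(trigger_reason(Sample(speed_kph=62.0, speed_limit_kph=80.0, accel_long_g=-0.6)))
```

The return value says what the sensors saw, not why it happened, which is the gap that the video has to fill.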

A clear understanding of the context of each event is critical for generating an accurate driver safety score and for building an effective, data-driven training program. An accurate driver training and coaching program can make the fleet safer and reduce operating costs.

With autonomous-driving AI (artificial intelligence) technology, the video can be reviewed automatically, yielding insights that were not previously available, such as:

- Road departure

- Centerline crossing

- Solid lane crossing

- Improper lane change (no signal)

- Safe following distance

- Forward collision warning

- Cyclist and pedestrian collision warning

- Stop sign compliance

This technology is popularly known as ADAS (advanced driver-assistance systems) or “Autonomous Driving Level 1”, and it offers an extensive detection and warning feature set with very high accuracy. A quick calculation shows why accuracy matters: a single false alarm per vehicle per day may seem acceptable, but for a fleet of 10,000 vehicles it adds up to 10,000 false alarms per day, or roughly 7 per minute around the clock (10,000 alarms spread over 1,440 minutes), and more than that if the vehicles operate for only part of the day. This stream of false alarms frustrates drivers and causes a significant amount of irrelevant video to be collected and stored.
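The per-minute figure depends on how many hours the alarms are spread over. The short sketch below works through the arithmetic; the function name and the `operating_hours` parameter are illustrative assumptions, not part of any product. Spread over a full 24 hours the fleet sees about 7 false alarms per minute, while a 1,000-minute operating day (roughly 16.7 hours) gives 10 per minute.

```python
def fleet_false_alarms_per_minute(
    vehicles: int,
    false_alarms_per_vehicle_per_day: float,
    operating_hours: float = 24.0,  # assumption: hours per day over which alarms occur
) -> float:
    """Fleet-wide false alarms per minute of operation."""
    alarms_per_day = vehicles * false_alarms_per_vehicle_per_day
    return alarms_per_day / (operating_hours * 60)


rate = fleet_false_alarms_per_minute(10_000, 1.0)
print(f"{rate:.1f} false alarms per minute")  # prints "6.9 false alarms per minute"
```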

Accuracy, or as we refer to it, “detection precision”, is of the utmost importance in alerting the driver consistently with only accurate and relevant warnings. This ensures that drivers view the ADAS capabilities as a valuable safety tool and not an annoyance.
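Assuming “detection precision” is meant in the standard machine-learning sense (the share of issued alerts that were actually warranted), it can be computed as below. The function name and the example counts are made up for illustration and are not measured results.

```python
def detection_precision(true_alerts: int, false_alerts: int) -> float:
    """Fraction of issued alerts that were warranted: TP / (TP + FP)."""
    total = true_alerts + false_alerts
    if total == 0:
        raise ValueError("no alerts were issued, so precision is undefined")
    return true_alerts / total


# Illustrative counts only: 95 warranted alerts and 5 false alarms.
print(detection_precision(true_alerts=95, false_alerts=5))  # prints 0.95
```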

In 2021, we envision three pathways by which AI technology will be adopted in the fleet market:

- Cloud-based perception - the simplest and fastest approach

This solution is an option if a fleet is already collecting video from the vehicles, and sending and storing this data in the cloud. The perception AI will run solely in the cloud, and will process the video uploaded by the fleet vehicles. This approach requires no modifications to the currently installed dash cameras.

- Retrofit of existing dash cameras - the most cost-effective edge-processing approach

Existing low-power dash cameras in the fleet will be upgraded with AI perception software installed over the air (OTA). The AI software will use the compute power available on the dash cam to provide real-time alerts and to limit video collection to relevant events.

- Cutting-edge dash camera hardware - the most advanced approach

New, state-of-the-art dash cameras will ship with ADAS built in by the manufacturer (either the TSP or its device manufacturer). Adding these functions at design time ensures that the ADAS features have the processing power they require and enables optimal integration with other sensors, such as a Driver Monitoring System (DMS). This allows the most effective real-time detections and warnings, with only the most relevant events recorded by the camera being uploaded to the cloud.

Brodmann17 is a leading developer of camera-AI for fleet management. Recently, Brodmann17 announced the first integrations with SmarterAI, EdgeTensor and Thinkware. In 2021, some of the world’s most important TSPs will launch Brodmann17’s camera-AI, which is now in integration and testing.
