The human brain is built from a huge network of interconnected neurons. Deep learning is an effort to create models that loosely mimic the structure and function of the brain.

Training Models In Deep Learning

Like any machine learning model, a deep learning model is trained by exposing it to many different data examples. Through this training process, the neural network learns to recognize similar objects and can begin to predict what new data represents.

For instance, if we want to train a model to recognize a car, then at each iteration we ask the model what it thinks is inside a digital picture. If the model gets it wrong, we go back and adjust the neural network so that its answer moves toward the correct one. This is repeated over many iterations until the model gets the answers right consistently.
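The loop described above can be sketched in a few lines of plain Python. This is a minimal illustration under stated assumptions, not a real image model: each "picture" is reduced to two made-up feature values, the model is a single neuron, and the names `predict` and `train`, the toy data, and the learning rate are all hypothetical choices for the example.

```python
import math

def predict(weights, bias, features):
    """Return the model's estimated probability that the example is a car."""
    z = sum(w * x for w, x in zip(weights, features)) + bias
    return 1.0 / (1.0 + math.exp(-z))

def train(examples, labels, epochs=200, learning_rate=0.5):
    """Repeatedly show examples to the model and nudge it after each guess."""
    weights = [0.0] * len(examples[0])
    bias = 0.0
    for _ in range(epochs):
        for features, label in zip(examples, labels):
            # How far was the guess from the true answer?
            error = predict(weights, bias, features) - label
            # Adjust each weight in the direction that reduces the error.
            weights = [w - learning_rate * error * x
                       for w, x in zip(weights, features)]
            bias -= learning_rate * error
    return weights, bias

# Hypothetical toy data: two invented features per "image".
# Label 1 means "car", label 0 means "not a car".
examples = [[0.9, 0.8], [0.8, 0.9], [0.7, 0.9],
            [0.1, 0.2], [0.2, 0.1], [0.3, 0.2]]
labels = [1, 1, 1, 0, 0, 0]

weights, bias = train(examples, labels)
predictions = [round(predict(weights, bias, x)) for x in examples]
print(predictions)
```

A real deep learning model has many layers and far more weights, but the ask, compare, and adjust cycle has the same shape.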

Benefits Of Deep Learning

The amazing thing about deep learning is that, as data flows through it, the neural network builds up increasingly abstract representations. The shallow layers respond to blobs of colour and edges, and as you move into the deeper layers you begin to see parts of objects and then whole objects.

Everything emerges automatically: we don’t have to tell the deep learning network what to focus on. Given the right setup, the right labels, and the right training data, the network learns to represent the objects by itself. This has led deep learning to outperform other machine learning techniques on many tasks.

In contrast, classical machine learning requires you to have the domain knowledge to teach the machine. For example, to read text you have to teach the machine the exact shapes of the characters.

Deep learning gives you a tool to recognize features automatically, and it has consequently been adopted across many different industries. A software engineer can start to develop computer vision algorithms for a wide range of applications, with the deep learning algorithm doing the feature extraction automatically.

The other big advantage of deep learning is that it can ingest a very large amount of data, perhaps hundreds of millions of examples, with no inherent limit on the size of the training set. As more data is added, the accuracy of the deep learning algorithm generally improves.

Comparisons To The Brain Structure

Deep learning can be compared to brain structure and function:

- Convolutional neural networks (CNNs) scan across an image, applying a convolution operation to it and creating meaningful features that the network uses to classify objects.

- Recurrent neural networks (RNNs) ingest a sequence of examples, such as the words in a text or the frames in a video, and can capture how the information changes along the sequence.

- Generative adversarial networks (GANs) can be thought of as two networks competing against each other. They can be used to generate a training data set, for example by producing synthetic images.
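To make the convolution operation mentioned for CNNs concrete, here is a minimal sketch in plain Python. It assumes a tiny grayscale image, no padding, and a stride of one. The function name `convolve2d`, the image, and the kernel are hypothetical. Like most deep learning libraries, it slides the kernel without flipping it (strictly, a cross-correlation). In a trained CNN the kernel values are learned; here they are hand-picked to respond to a vertical edge.

```python
def convolve2d(image, kernel):
    """Slide the kernel over the image (no padding, stride 1)."""
    kernel_h, kernel_w = len(kernel), len(kernel[0])
    out_h = len(image) - kernel_h + 1
    out_w = len(image[0]) - kernel_w + 1
    feature_map = []
    for row in range(out_h):
        out_row = []
        for col in range(out_w):
            # Multiply the kernel with the image patch under it and sum.
            total = 0
            for i in range(kernel_h):
                for j in range(kernel_w):
                    total += image[row + i][col + j] * kernel[i][j]
            out_row.append(total)
        feature_map.append(out_row)
    return feature_map

# Hypothetical 4x4 grayscale image: dark on the left, bright on the right.
image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]
# Hand-picked kernel that responds to a dark-to-bright vertical edge.
kernel = [
    [-1, 1],
    [-1, 1],
]

feature_map = convolve2d(image, kernel)
print(feature_map)  # [[0, 2, 0], [0, 2, 0], [0, 2, 0]]
```

The feature map is non-zero only in the middle column, where the edge sits, which is the sense in which a convolution turns raw pixels into a feature.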

Types of Deep Learning & Training

- Supervised learning means adding a label, or true answer, to each example. If the network is being trained to detect vehicles in an automotive application, for instance, you need to put a bounding box around each vehicle.

- Unsupervised learning means the deep learning model identifies structure, such as clusters, in data that has no labels. If you have millions of medical records, the model can give you insight into the records automatically.

- Reinforcement learning means the model learns by trial and error over a period of time, using feedback on the outcome of its actions.

The amazing thing about deep learning, and the reason it has dominated machine learning for the past decade, is that it requires only a simple set of mathematical operations to achieve significant results.

Consider the simplest neural network, a single neuron: the only thing the neuron does is compute a weighted sum of its inputs. The weights of this sum are learnt in the training process. If you stack several layers of these neurons with a non-linearity between them, you have a deep neural network with a greater capacity to represent your data, which can increase the accuracy of the network.
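As a rough illustration, a single neuron and a small stack of neurons can be written as follows. The weights and biases are arbitrary values chosen for the example rather than learned ones, ReLU is assumed as the non-linearity, and the function names are hypothetical.

```python
def relu(value):
    """A common non-linearity: keep positive values, clamp negatives to zero."""
    return max(0.0, value)

def neuron(inputs, weights, bias):
    """Weighted sum of the inputs plus a bias, followed by the non-linearity."""
    weighted_sum = sum(w * x for w, x in zip(weights, inputs)) + bias
    return relu(weighted_sum)

def layer(inputs, weight_rows, biases):
    """A layer is several neurons that all read the same inputs."""
    return [neuron(inputs, w, b) for w, b in zip(weight_rows, biases)]

inputs = [1.0, 2.0]

# Hidden layer with two neurons (arbitrary example weights).
hidden = layer(inputs, weight_rows=[[0.5, -1.0], [1.0, 1.0]], biases=[0.0, -1.0])

# Output neuron that reads the hidden layer's outputs.
output = neuron(hidden, weights=[2.0, 0.5], bias=0.0)

print(hidden)  # [0.0, 2.0]
print(output)  # 1.0
```

Without the non-linearity, stacking layers would collapse into a single weighted sum, which is why it matters for the extra representational capacity.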

Matrix Manipulation

Feedforward refers to a single pass of data through the neural network, from input to output. Each layer is computed with a matrix-to-matrix multiplication, so the pass proceeds as a series of processing stages.
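The sketch below shows a feedforward pass written as matrix multiplications, assuming a batch of three examples and two small layers with arbitrary weights. The helper names `matmul` and `dense_forward` are hypothetical, and the pure-Python multiplication is only for readability; in practice an optimized library or hardware would perform this step.

```python
def matmul(a, b):
    """Multiply matrix a (n x k) by matrix b (k x m), giving an n x m matrix."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)]
            for row in a]

def dense_forward(batch, weights, biases):
    """One stage of the pass: batch times weights, plus biases, then ReLU."""
    pre_activation = matmul(batch, weights)
    return [[max(0.0, value + bias) for value, bias in zip(row, biases)]
            for row in pre_activation]

# Hypothetical batch: 3 examples (rows) with 2 features each (columns).
batch = [[1.0, 2.0], [0.0, 1.0], [3.0, 0.0]]

# Layer 1 maps 2 features to 2 hidden units; layer 2 maps them to 1 output.
w1, b1 = [[0.5, 1.0], [-1.0, 1.0]], [0.0, -1.0]
w2, b2 = [[2.0], [0.5]], [0.0]

hidden = dense_forward(batch, w1, b1)
outputs = dense_forward(hidden, w2, b2)

print(outputs)  # [[1.0], [0.0], [4.0]]
```

Because the whole batch is processed by one multiplication per layer, the cost of the pass is dominated by matrix-to-matrix products.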

If you are developing a processor for running neural networks, it must be strong at matrix-to-matrix multiplication. This is the main reason people are turning to GPUs to train and run neural networks.

There is now also dedicated hardware that optimizes the data flow through the algorithm, minimizes memory and compute requirements, and is tuned for specific applications. This custom hardware is constantly developing and improving, and it can be found in data centers, in the cloud, and on edge devices.

In Summary

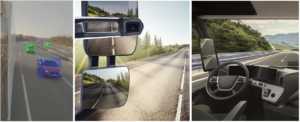

Deep learning is here to stay: we will see more and more applications, and humans will put more and more trust in the accuracy of the computer. In automotive applications, deep learning perception software will be used first as an enhancement to vehicle and pedestrian safety, and later to support self-driving vehicles.

If you’d like to learn more about deep learning, listen to the series of lectures by our Co-Founder & CTO, Dr Amir Alush, on Elevate.

ABOUT THE AUTHOR: Dr. Amir Alush

CTO & CO-FOUNDER, BRODMANN17

Dr Amir Alush is an enthusiast for algorithm coding and design. He has many years’ experience in designing and implementing algorithms in the fields of Computer Vision, Machine Learning and Deep Learning, working on big data. His PhD research focused on Machine Learning algorithms and discrete optimization problems with applications to Computer Vision. His own personal breakthroughs in deep learning culminated in his co-founding Brodmann17 to bring uncompromising AI to the edge and everyday applications.
