What do the latest cars, vacuum cleaners and front doors have in common? They all have a camera that tries to understand its surroundings. They are all small enough that you barely notice them, and they all run on batteries. The technology behind them is called machine vision, a multi-billion-dollar market that is growing fast and becoming more and more mainstream.

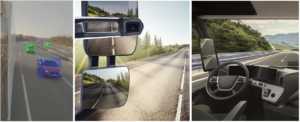

Everyday devices around us are becoming “intelligent devices”: smart door locks that recognize us and keep strangers outside, robotic vacuum cleaners that take into account who’s in the room while cleaning, and cameras all around our cars to help drivers make critical and potentially life-saving decisions.

All of this is possible thanks to a set of Artificial Intelligence (AI) algorithms popularly known as deep learning. For the first time, algorithms can deliver uncompromising accuracy in video understanding. The first solutions for running these complex AI algorithms have come in the form of very high-end, AI-specific processors, and companies like Nvidia and Intel are racing head to head to produce the most powerful AI processors on the market.

The Initial Approach: Make Larger AI Processors

While this is going on, manufacturers are struggling with the cost of adding AI to their devices, because high-end processors are large, expensive and power-hungry. As a result, the innovative universe that brings so much promise is largely detached from the "reality universe" that manufacturers now face.

Battery-hungry processors simply do not fit many of today’s products. For example, the AI in a robotic vacuum cleaner competes with the motor for battery power, in the same way that an autopilot competes with the motor for a Tesla’s battery, eventually reducing the car’s range by a healthy percentage and requiring active cooling to operate efficiently.

In the reality universe, most products are two to three generations behind the most recent high-end products. AI-specific processors offer better performance per watt for AI applications, but their architecture and toolchain impose restrictions on the algorithms they can run.

The Second Approach: Make Smaller AI Algorithms

Companies have therefore started to look more closely at software and algorithms, to see whether they can be optimized to need less computing power and fit into commodity processors. Deep learning adds even more complexity on embedded platforms: not only are compute and memory limited, but the tools also lag behind.

Companies like XNOR, for example, try to replace classical vision with deep-learning vision. They do this by using brute force to compress the algorithm so that it fits the processor and needs less compute power. This move to deep-learning vision, however, is not ideal, because accuracy and quality end up being compromised as well.

Brodmann17’s Approach: Powerful Algorithms on Any Processor

Brodmann17 has found the golden path to solve these challenges: a way to use a fraction of the compute power without losing accuracy, so that the algorithm fits the standard commodity processors that manufacturers prefer.

According to the company’s CEO and Co-Founder Adi Pinhas, Brodmann17 takes an innovative approach to neural networks: sharing calculations and parameters (weights). By reusing calculations, he says, the company’s networks effectively perform fewer calculations than the best and most popular neural networks. Sharing weights also produces a smaller model. These two advantages, less compute and less memory, are what allow the algorithms to run on commodity embedded platforms, with no need for exotic and very expensive hardware.
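
To make the idea of sharing concrete, here is a minimal sketch in Python. It is not Brodmann17's architecture, which the article does not describe; it only counts parameters and multiply-accumulate operations for a toy setup in which several vision tasks either each carry their own network or share one common backbone. The layer sizes, the number of tasks and the function names are all assumptions chosen for illustration.

```python
# Illustrative only: a toy cost model, not Brodmann17's actual method.
# All layer sizes and the number of tasks below are hypothetical.

def dense_cost(n_in, n_out):
    """Return (parameters, multiply-accumulates) for one dense layer without bias."""
    return n_in * n_out, n_in * n_out


def separate_networks(n_tasks, backbone, head):
    """Every task stores and evaluates its own backbone and head: nothing is shared."""
    params = macs = 0
    for _ in range(n_tasks):
        for n_in, n_out in backbone + [head]:
            p, m = dense_cost(n_in, n_out)
            params += p
            macs += m
    return params, macs


def shared_backbone(n_tasks, backbone, head):
    """The backbone is stored once and evaluated once per input; only heads are per task."""
    params = macs = 0
    for n_in, n_out in backbone:
        p, m = dense_cost(n_in, n_out)
        params += p
        macs += m
    head_params, head_macs = dense_cost(*head)
    params += n_tasks * head_params
    macs += n_tasks * head_macs
    return params, macs


# Hypothetical sizes: two backbone layers and a small per-task output head.
BACKBONE = [(256, 128), (128, 64)]
HEAD = (64, 4)

if __name__ == "__main__":
    print("separate:", separate_networks(3, BACKBONE, HEAD))
    print("shared:  ", shared_backbone(3, BACKBONE, HEAD))
```

With these made-up sizes, three separate networks need 123,648 weights and as many multiply-accumulates per input, while the shared version needs 41,728 of each. The weights that are stored once account for the smaller model, and the backbone that is evaluated once accounts for the lower compute, which mirrors the two savings described above.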
